Despite substantial progress in text-to-image (T2I) generation from models such as DALL-E 3, Imagen 3, and Stable Diffusion 3, achieving consistent output quality, in both aesthetics and prompt alignment, remains a persistent challenge. Large-scale pretraining provides general knowledge but is not sufficient on its own to achieve high aesthetic quality and alignment. Supervised fine-tuning (SFT) is a critical post-training step, but its effectiveness depends strongly on the quality of the fine-tuning dataset.

Current public datasets used in SFT either target narrow visual domains (e.g., anime or specific art genres) or rely on basic heuristic filters over web-scale data. Human-led curation is expensive, non-scalable, and frequently fails to identify samples that yield the greatest improvements. Moreover, recent T2I models use internal proprietary datasets with minimal transparency, limiting the reproducibility of results and slowing collective progress in the field.

Approach: Model-Guided Dataset Curation

To mitigate these issues, Yandex has released Alchemist, a publicly available, general-purpose SFT dataset composed of 3,350 carefully selected image-text pairs. Unlike conventional datasets, Alchemist is constructed using a novel methodology that uses a pre-trained diffusion model as a sample quality estimator. This approach enables the selection of training data with high impact on generative model performance without relying on subjective human labeling or simplistic aesthetic scoring.

Alchemist is designed to improve the output quality of T2I models through targeted fine-tuning. The release also includes fine-tuned versions of five publicly available Stable Diffusion models. The dataset and models are accessible on Hugging Face under an open license. The preprint covers the methodology and experiments in more detail.

Technical Design: Filtering Pipeline and Dataset Characteristics

The construction of Alchemist involves a multi-stage filtering pipeline starting from ~10 billion web-sourced images. The pipeline is structured as follows:

Initial Filtering: NSFW content and low-resolution images are removed, keeping only images above 1024×1024 pixels.

Coarse Quality Filtering: Application of classifiers to exclude images with compression artifacts, motion blur, watermarks, and other defects. These classifiers were trained on standard image quality assessment datasets such as KonIQ-10k and PIPAL.

Deduplication and IQA-Based Pruning: SIFT-like features are used for clustering similar images, retaining only high-quality ones. Images are further scored using the TOPIQ model, ensuring retention of clean samples.

Diffusion-Based Selection: A key contribution is the use of a pre-trained diffusion model’s cross-attention activations to rank images. A scoring function identifies samples that strongly activate features associated with visual complexity, aesthetic appeal, and stylistic richness. This enables the selection of samples most likely to enhance downstream model performance.

Caption Rewriting: The final selected images are re-captioned using a vision-language model fine-tuned to produce prompt-style textual descriptions. This step ensures better alignment and usability in SFT workflows.
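The filtering stages above can be sketched as a chain of simple filters followed by a top-k selection. This is a minimal illustration, not the authors' implementation: the `Candidate` fields, the thresholds (`max_defect`, `min_iqa`), and the interpretation of the resolution rule as "both sides above 1024 pixels" are all assumptions, and each score is a placeholder for the output of a real model (quality classifier, TOPIQ, diffusion-based scorer). Caption rewriting is omitted because it requires a vision-language model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    # Every field is a hypothetical stand-in for a real model output.
    image_id: str
    width: int
    height: int
    nsfw: bool
    defect_prob: float      # coarse quality classifier output in [0, 1]
    cluster_id: int         # near-duplicate cluster label
    iqa_score: float        # image quality score (e.g. from a TOPIQ-style model)
    diffusion_score: float  # score derived from cross-attention activations


def curate(candidates, k, min_side=1024, max_defect=0.5, min_iqa=0.6):
    """Run the four filtering stages and return the k best candidates."""
    # Stage 1: drop NSFW and low-resolution images (assumed per-side rule).
    stage1 = [c for c in candidates
              if not c.nsfw and c.width > min_side and c.height > min_side]

    # Stage 2: coarse quality filter on the classifier's defect probability.
    stage2 = [c for c in stage1 if c.defect_prob < max_defect]

    # Stage 3: keep the highest-IQA image per duplicate cluster,
    # then prune survivors that fall below the IQA threshold.
    best_per_cluster = {}
    for c in stage2:
        current = best_per_cluster.get(c.cluster_id)
        if current is None or c.iqa_score > current.iqa_score:
            best_per_cluster[c.cluster_id] = c
    stage3 = [c for c in best_per_cluster.values() if c.iqa_score >= min_iqa]

    # Stage 4: rank by the diffusion-based score and keep the top k.
    ranked = sorted(stage3, key=lambda c: c.diffusion_score, reverse=True)
    return ranked[:k]


pool = [
    Candidate("a", 2048, 1536, False, 0.10, 0, 0.90, 0.80),
    Candidate("b", 2048, 1536, False, 0.10, 0, 0.70, 0.95),  # duplicate of "a", lower IQA
    Candidate("c", 800, 600, False, 0.10, 1, 0.90, 0.99),    # too small
    Candidate("d", 2048, 2048, True, 0.10, 2, 0.90, 0.99),   # NSFW
    Candidate("e", 2048, 2048, False, 0.90, 3, 0.90, 0.99),  # likely defective
    Candidate("f", 1600, 1600, False, 0.20, 4, 0.80, 0.60),
    Candidate("g", 1600, 1600, False, 0.20, 5, 0.30, 0.97),  # low IQA
    Candidate("h", 3000, 2000, False, 0.05, 6, 0.95, 0.90),
]
selected = curate(pool, k=2)
print([c.image_id for c in selected])  # ['h', 'a']
```

Note that the cheap filters run first and the expensive diffusion-based ranking runs last, so the costly scorer only sees a small fraction of the original pool.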

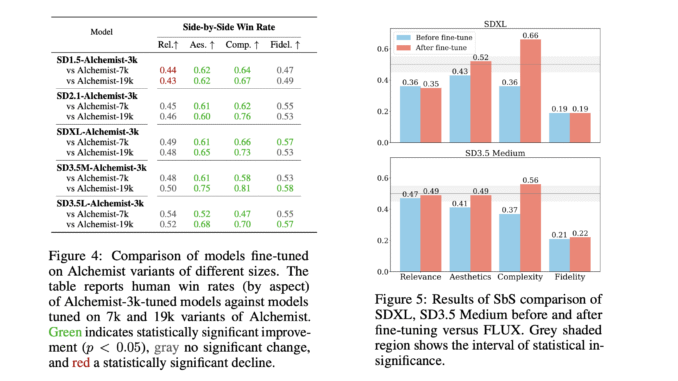

Through ablation studies, the authors determine that increasing the dataset size beyond 3,350 (e.g., 7k or 19k samples) results in lower quality of fine-tuned models, reinforcing the value of targeted, high-quality data over raw volume.

Results Across Multiple T2I Models

The effectiveness of Alchemist was evaluated across five Stable Diffusion variants: SD1.5, SD2.1, SDXL, SD3.5 Medium, and SD3.5 Large. Each model was evaluated in three configurations: (i) fine-tuned on the Alchemist dataset, (ii) fine-tuned on a size-matched subset of LAION-Aesthetics v2, and (iii) the original baseline without additional fine-tuning.
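A size-matched comparison means the reference dataset is cut down to the same number of samples as Alchemist, so that any difference reflects data quality rather than data volume. The article does not say how the LAION-Aesthetics v2 subset was drawn; the sketch below assumes uniform random sampling with a fixed seed, and `laion_ids` is a placeholder list rather than real data.

```python
import random


def size_matched_subset(reference_pool, target_size, seed=0):
    """Draw a reproducible random subset with exactly target_size items."""
    if target_size > len(reference_pool):
        raise ValueError("reference pool is smaller than the target size")
    rng = random.Random(seed)  # fixed seed keeps the comparison reproducible
    return rng.sample(reference_pool, target_size)


ALCHEMIST_SIZE = 3350  # number of image-text pairs in Alchemist
laion_ids = [f"laion-{i}" for i in range(100_000)]  # placeholder IDs
subset = size_matched_subset(laion_ids, ALCHEMIST_SIZE)
print(len(subset))  # 3350
```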

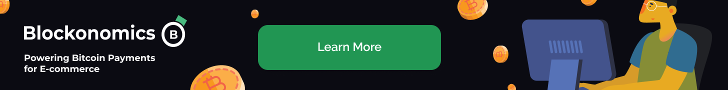

Human Evaluation: Expert annotators performed side-by-side assessments across four criteria — text-image relevance, aesthetic quality, image complexity, and fidelity. Alchemist-tuned models showed statistically significant improvements in aesthetic and complexity scores, often outperforming both baselines and LAION-Aesthetics-tuned versions by margins of 12–20%. Importantly, text-image relevance remained stable, suggesting that prompt alignment was not negatively affected.
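To make "statistically significant" concrete for side-by-side comparisons, one simple option is an exact two-sided sign test on the win and loss counts. The article does not state which test the authors used, so the choice of test, the exclusion of ties, and the example counts below are all assumptions for illustration.

```python
from math import comb


def sign_test(wins, losses):
    """Return (win_rate, two-sided p-value) for a side-by-side comparison.

    Ties are assumed to be excluded before calling. Under the null
    hypothesis, each non-tied comparison is a fair coin flip.
    """
    n = wins + losses
    if n == 0:
        raise ValueError("need at least one non-tied comparison")
    k = max(wins, losses)
    # Upper tail P(X >= k) for X ~ Binomial(n, 0.5).
    tail = sum(comb(n, i) for i in range(k, n + 1)) / 2 ** n
    # The distribution is symmetric, so double the tail and cap at 1.
    p_value = min(1.0, 2 * tail)
    return wins / n, p_value


# Hypothetical counts: the tuned model is preferred in 60 of 100 non-tied pairs.
win_rate, p_value = sign_test(wins=60, losses=40)
print(f"win rate = {win_rate:.2f}, p = {p_value:.4f}")
```

With these made-up counts the p-value sits just above 0.05, which shows why a 60/40 split on 100 pairs is weaker evidence than it might look and why evaluation sets need to be reasonably large.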

Automated Metrics: Across metrics such as FD-DINOv2, CLIP Score, ImageReward, and HPS-v2, Alchemist-tuned models generally scored higher than their counterparts. Notably, improvements were more consistent when compared to size-matched LAION-based models than to baseline models.
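Of these metrics, CLIP Score is the simplest to write down: one common definition is 100 times the cosine similarity between the image embedding and the text embedding, clipped at zero. The sketch below shows only that arithmetic on toy vectors. Real embeddings would come from a CLIP model, and the exact variant used in the paper is not specified in this article.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("zero-length vector has no direction")
    return dot / (norm_u * norm_v)


def clip_style_score(image_emb, text_emb):
    """Scaled, non-negative cosine similarity (one common CLIP Score form)."""
    return max(0.0, 100.0 * cosine_similarity(image_emb, text_emb))


# Toy 2-D "embeddings"; real CLIP embeddings have hundreds of dimensions.
print(clip_style_score([3.0, 4.0], [4.0, 3.0]))
print(clip_style_score([1.0, 0.0], [-1.0, 0.0]))  # opposite directions clip to 0.0
```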

Dataset Size Ablation: Fine-tuning with larger variants of Alchemist (7k and 19k samples) led to lower performance, underscoring that stricter filtering and higher per-sample quality are more impactful than dataset size.
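One way to see why a looser cutoff dilutes the data: if samples are ranked by a quality score and the top k are kept, the average score of the selection can only fall as k grows. The sketch below demonstrates this on synthetic scores. It assumes the larger variants come from the same ranking with a relaxed cutoff, and it says nothing about downstream model quality, which the authors measured empirically.

```python
import random


def mean_top_k(scores, k):
    """Average score of the k highest-scoring samples."""
    ranked = sorted(scores, reverse=True)
    if not 0 < k <= len(ranked):
        raise ValueError("k must be between 1 and the number of scores")
    return sum(ranked[:k]) / k


# Synthetic scores standing in for a pool of candidates (not real data).
rng = random.Random(0)
scores = [rng.gauss(0.0, 1.0) for _ in range(25_000)]

means = {k: mean_top_k(scores, k) for k in (3_350, 7_000, 19_000)}
for k, m in means.items():
    print(f"top {k:>6}: mean score {m:.3f}")
```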

Yandex has utilized the dataset to train its proprietary text-to-image generative model, YandexART v2.5, and plans to continue leveraging it for future model updates.

Conclusion

Alchemist provides a well-defined and empirically validated pathway to improve the quality of text-to-image generation via supervised fine-tuning. The approach emphasizes sample quality over scale and introduces a replicable methodology for dataset construction without reliance on proprietary tools.

While the improvements are most notable in perceptual attributes like aesthetics and image complexity, the framework also highlights the trade-offs that arise in fidelity, particularly for newer base models already optimized through internal SFT. Nevertheless, Alchemist establishes a new standard for general-purpose SFT datasets and offers a valuable resource for researchers and developers working to advance the output quality of generative vision models.

See the paper, and the Alchemist dataset on Hugging Face, for details. Thanks to the Yandex team for the thought leadership and resources for this article.

Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of an Artificial Intelligence Media Platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable by a wide audience. The platform has over 2 million monthly views, illustrating its popularity among audiences.
